Quick Start Guide: Optimal Feature Selection for Classification

This is a Python version of the corresponding OptimalFeatureSelection quick start guide.

In this example we will use Optimal Feature Selection on the Mushroom dataset, where the goal is to distinguish poisonous from edible mushrooms.

First we load in the data and split into training and test datasets:

import pandas as pd

df = pd.read_csv(

"agaricus-lepiota.data",

header=None,

dtype='category',

names=["target", "cap_shape", "cap_surface", "cap_color", "bruises",

"odor", "gill_attachment", "gill_spacing", "gill_size",

"gill_color", "stalk_shape", "stalk_root", "stalk_surface_above",

"stalk_surface_below", "stalk_color_above", "stalk_color_below",

"veil_type", "veil_color", "ring_number", "ring_type",

"spore_color", "population", "habitat"],

)

target cap_shape cap_surface ... spore_color population habitat

0 p x s ... k s u

1 e x s ... n n g

2 e b s ... n n m

3 p x y ... k s u

4 e x s ... n a g

5 e x y ... k n g

6 e b s ... k n m

... ... ... ... ... ... ... ...

8117 p k s ... w v d

8118 p k y ... w v d

8119 e k s ... b c l

8120 e x s ... b v l

8121 e f s ... b c l

8122 p k y ... w v l

8123 e x s ... o c l

[8124 rows x 23 columns]from interpretableai import iai

X = df.iloc[:, 1:]

y = df.iloc[:, 0]

(train_X, train_y), (test_X, test_y) = iai.split_data('classification', X, y,

seed=1)

Model Fitting

We will use a GridSearch to fit an OptimalFeatureSelectionClassifier:

grid = iai.GridSearch(

iai.OptimalFeatureSelectionClassifier(

random_seed=1,

),

sparsity=range(1, 11),

)

grid.fit(train_X, train_y, validation_criterion='auc')

All Grid Results:

Row │ sparsity train_score valid_score rank_valid_score

│ Int64 Float64 Float64 Int64

─────┼──────────────────────────────────────────────────────

1 │ 1 0.530592 0.883816 10

2 │ 2 0.666962 0.958933 9

3 │ 3 0.852881 0.97677 8

4 │ 4 0.886338 0.989837 6

5 │ 5 0.8786 0.989776 7

6 │ 6 0.918052 0.999586 4

7 │ 7 0.921551 0.999586 5

8 │ 8 0.930723 0.999783 1

9 │ 9 0.933428 0.999783 2

10 │ 10 0.933968 0.999755 3

Best Params:

sparsity => 8

Best Model - Fitted OptimalFeatureSelectionClassifier:

Constant: -0.102149

Weights:

gill_color=b: 1.30099

gill_size=n: 2.15205

odor=a: -3.56147

odor=f: 2.41679

odor=l: -3.57161

odor=n: -3.58869

spore_color=r: 6.1136

stalk_surface_above=k: 1.56583

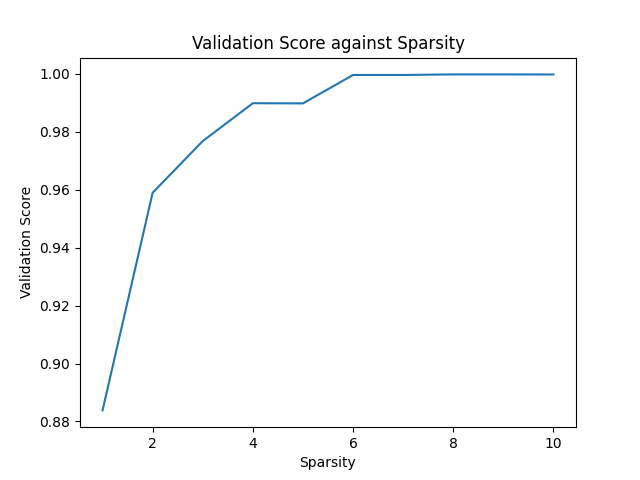

(Higher score indicates stronger prediction for class `p`)The model selected a sparsity of 8 as the best parameter, but we observe that the validation scores are close for many of the parameters. We can use the results of the grid search to explore the tradeoff between the complexity of the regression and the quality of predictions:

grid.plot(type='validation')

We see that the quality of the model quickly increases with as features are adding, reaching AUC 0.98 with 3 features. After this, additional features increase the quality more slowly, eventually reaching AUC close to 1 with 6 features. Depending on the application, we might decide to choose a lower sparsity for the final model than the value chosen by the grid search.

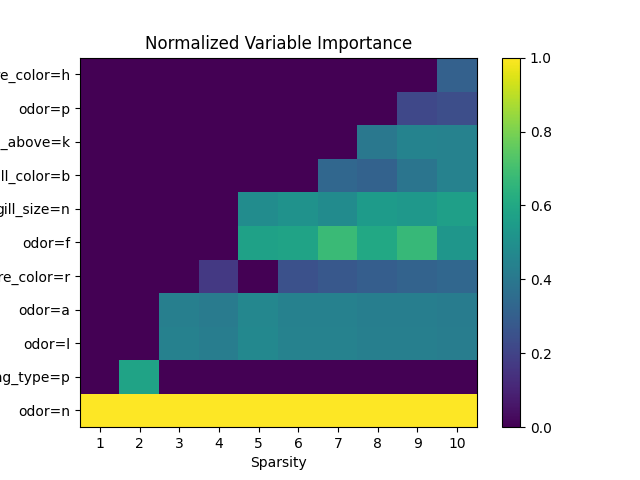

We can see the relative importance of the selected features with variable_importance:

grid.get_learner().variable_importance()

Feature Importance

0 odor=n 0.247412

1 odor=f 0.148001

2 gill_size=n 0.138891

3 odor=l 0.109303

4 odor=a 0.105547

5 stalk_surface_above=k 0.098680

6 spore_color=r 0.077806

.. ... ...

105 stalk_surface_below=s 0.000000

106 stalk_surface_below=y 0.000000

107 veil_color=n 0.000000

108 veil_color=o 0.000000

109 veil_color=w 0.000000

110 veil_color=y 0.000000

111 veil_type=p 0.000000

[112 rows x 2 columns]We can also look at the feature importance across all sparsity levels:

grid.plot(type='importance')

We can make predictions on new data using predict:

grid.predict(test_X)

array(['e', 'e', 'e', ..., 'p', 'p', 'e'], dtype=object)We can evaluate the quality of the model using score with any of the supported loss functions. For example, the misclassification on the training set:

grid.score(train_X, train_y, criterion='misclassification')

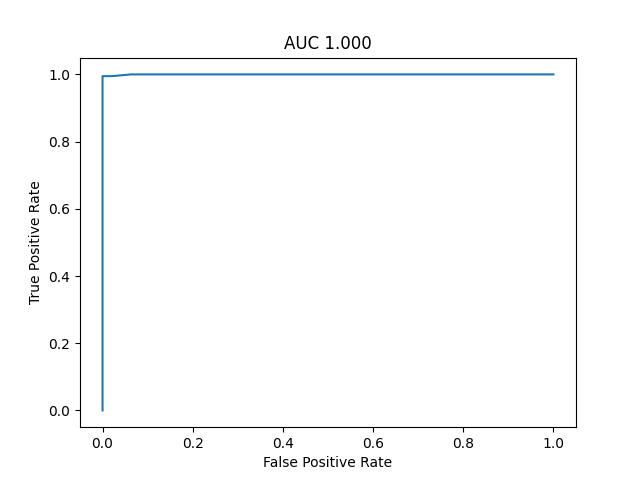

0.9982416036574644Or the AUC on the test set:

grid.score(test_X, test_y, criterion='auc')

0.9997835249688102We can plot the ROC curve on the test set as an interactive visualization:

roc = grid.ROCCurve(test_X, test_y, positive_label='p')

We can also plot the same ROC curve as a static image:

roc.plot()